When you build and configure a new SQL Server, one of the many things you should be doing is testing how it performs before it goes live. If you don’t do that, then you probably should have a hard think about doing so. It’s important the performance of the new server is tested to ensure it’s running sweetly before you go live, rather than finding out something is awry after you go live. I’ll explain a few types of tests you can do.

Basic Tests

There are two tests that I would start with for a new server. They require restoring a test database to both the new server and the old server it’s replacing. If it’s not replacing a server, then restore to a server that is closest to what the new server is.

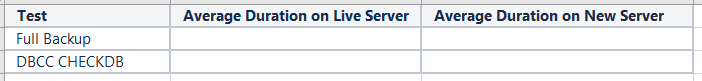

- Backup test. This is where you run a backup of the test database on both old and new servers and time it. Use the same command for each database. Easiest way to time it is to run the backup command in an SSMS window and when it’s completed, check the duration at the bottom right of the query window.

- Integrity check test. On the same test databases, run a DBCC CHECKDB and time it in the same way as the previous test.

Compare the times. Whack the results in Excel for comparison. If the new server is significantly slower, then you might have a problem and need to look into what might be the problem. If it’s significantly faster, and the server hardware and resources are all the same, then you probably have done something wrong in the test. These tests aren’t perfect, but together, they test a lot and quickly point out if you have any problems with the new server.

Try and keep the tests the same, like running it on the live server when it’s not busy, since the new server wont be busy. If it’s sharing the same shared folder to backup to, then don’t run the tests at the same time, or they’ll affect each other.

IO Testing

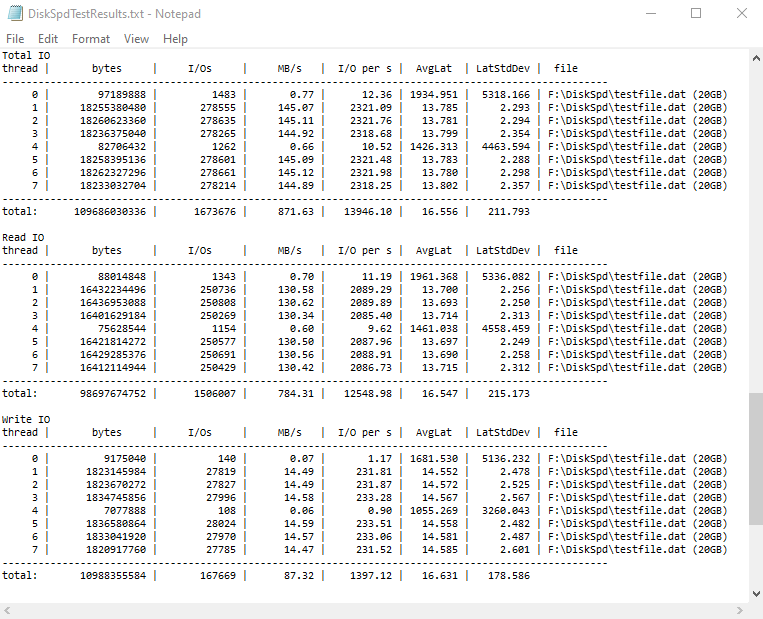

The other test I always use on a new server is to run an IO test. My favorite IO testing tool is DiskSpd. Pick a drive on both the old and new servers that’s used for the same purpose, e.g. your database data files, and run the test on them. It’ll output the IOPS and latency performance results.

This tool is great and easy to use, but has many different settings which can get overwhelming. The tool basically hammers the drive and reports back on the performance. Although you probably want to try replicate the workload that your application does, to start you off you can run this command that is close to a SQL Server workload:

DiskSpd.exe -c20G -d120 -r -w10 -t8 -o32 -b64K -h -L F:\DiskSpd\testfile.dat > DiskSpdTestResults.txt

Here is an example of the results:

Testing with a Mock Workload

If you feel the need to, you can create a mock workload. You can create a mock database with a mock table with mock data and you can be creative and test anything you want.

An example of what you could test is create a table with millions of rows of mock data and test the performance for seeks and scans in both low-fragmented and high-fragmented indexes , deletions, updates and inserts and index rebuilds.

This provides more specific statistics on how the new server performs with different operations which could then give insight into what type of operations of your application will improve or deteriorate once migrated to the new server.

Alternatively there is an open source performance testing tool already out there – HammerDB. This will save you some time, but give you less flexibility.

This type of test though really is mostly testing what you’ve already tested in the basic tests. So if you want that little bit extra statistics to analyse, then the extra time and extra effort put into this type of test could be worth it. Otherwise it isn’t really helpful.

Testing with a Real Application Workload

This is a really complicated and time consuming type of test. To do this you would restore a copy of a production database, or at least a table with the same and same indexes. Then you would run some queries and some insert/update/delete commands to test and time them and maybe analyse the execution plans. It sounds simple enough, but it really isn’t simple at all.

You would need to ensure that every test command starts with the data at the same state (no data has changed). After all, every insert/update/delete affects the data so the same test can’t be run twice. I mean, you can only delete the same row once and every insert affects the next test run. So be prepared to restore the backup multiple times.

If you wanted to ramp up the test to replicate your usual transaction load, you can’t just have hundreds of connections all running the same query at once. You will need to consider the effects of the queries and whether that happens in the live system or not. The same select statement could be run at the same time across hundreds of sessions, but that could just produce blocking and this isn’t what usually happens in production. So you would need the pick all the queries carefully, otherwise it’s worthless.

You could also grab the top 3-5 worst performing queries from your monitoring system and run them on your testing database. But the next time you build and test a server, that worst performing query may no longer be performing poorly. Maybe it was fixed with a new index? So every new server build would require new queries to test with. More hard work.

Best option?

You may think that the basic tests are… too basic and that you need something better or more complex which does harder testing. Together, these basic tests are testing CPU, memory and IO so do a very good job of testing. It’s best to start simple. The basic tests will tell you mostly what you want to know. If you still want more, then you can start delving into the more advanced tests. But just consider how much extra effort you will put and the benefits you get out of it of doing those extra tests.